If you are running a large-scale e-commerce site on Magento, Shopify, or a custom React/Next.js headless setup, you’ve probably felt it. That cold shiver down your spine when you open Google Search Console and see the dreaded phrase: “Crawled – currently not indexed.”

For years, we were told that “Content is King.” We optimized titles, we bought backlinks, and we obsessed over keyword density. But in 2026, the game has changed. The King isn’t dead, but he’s being held hostage by something much more sinister: The Rendering Gap.

The 20th of March Syndrome

I recently looked into a case where a major international store saw its traffic fall off a cliff. It didn’t happen because of a Google Core Update. It didn’t happen because they lost backlinks. It happened because Googlebot simply stopped “seeing” the website.

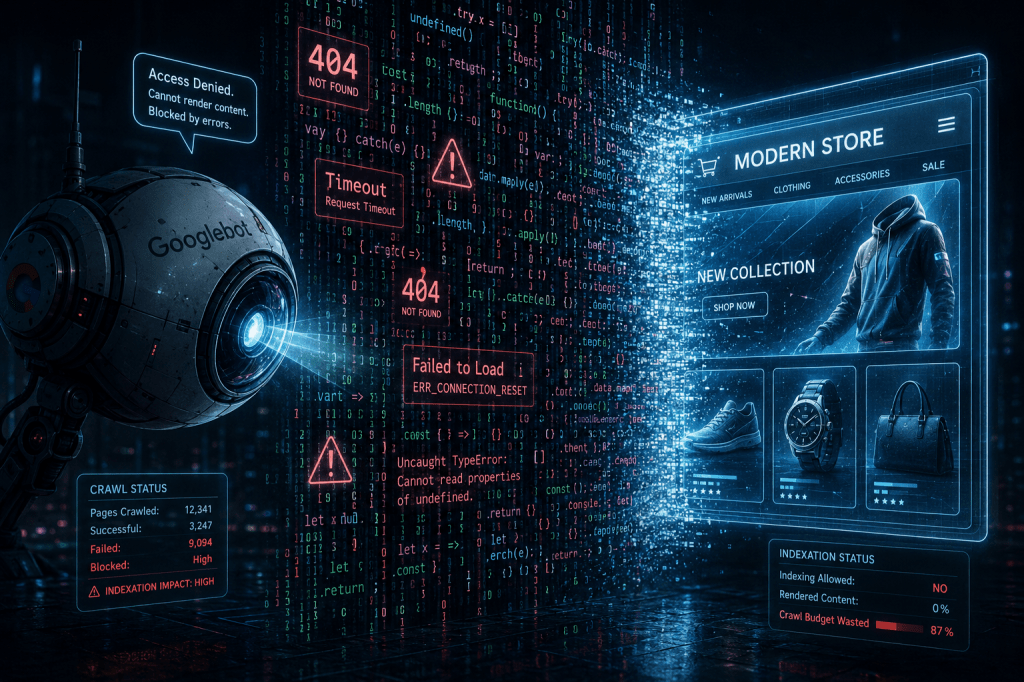

When we talk about SEO, we often imagine Google as a human reader who opens a page and reads the text. In reality, Google is a busy, resource-strapped system. When it encounters a modern e-commerce site, it doesn’t just read. It first fetches the raw HTML, then queues the page to run its JavaScript and build the final version. This is called rendering. And this is where many modern businesses are silently dying.

1. The Trap of “JavaScript Bloat”

The modern web is built on JavaScript. We want fast filters, beautiful sliders, and personalized recommendations (like Clerk.io). To do this, we pile script upon script. On a typical Magento store, you might find 200 to 300 individual JS files being requested during a single page load.

Here is the problem: Googlebot is a frugal guest. It doesn’t have the patience to wait for your 257 resources to load. If your server is slow, or if your CDN (Content Delivery Network) throttles the bot, Google gives up on whatever it can’t fetch in time and renders the page without it.

Suppose your product descriptions and price tags are injected by JavaScript, and Googlebot hits a “Rendering Error” or a “Timeout” on your core JS files (like storage-manager.js or sidebar.js). In that case it never sees them. It sees a white screen. A skeleton. To Google, that page is “thin content,” and thin content is exactly the kind of page that ends up “Crawled – currently not indexed.”
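One rough way to see how much of a page depends on rendering is to look at the raw HTML before any JavaScript runs. The Python sketch below uses only the standard library. It counts external `<script src>` tags and checks whether strings you care about, such as a product name and price, appear as visible text in the un-rendered markup. The `audit_raw_html` helper, the sample page, and the product strings are hypothetical. This is not how Googlebot itself works; it only approximates what exists before rendering.

```python
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Collects external script URLs and visible text from un-rendered HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.script_srcs: list[str] = []
        self.text_parts: list[str] = []
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            src = dict(attrs).get("src")
            if src:
                self.script_srcs.append(src)
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Text inside <script>/<style> is code, not visible page content.
        if self._skip_depth == 0:
            self.text_parts.append(data)


def audit_raw_html(html: str, must_see: list[str]) -> dict:
    """Count external scripts and list expected strings absent from the raw HTML text."""
    parser = RawHtmlAudit()
    parser.feed(html)
    parser.close()
    visible = " ".join(parser.text_parts)
    return {
        "external_scripts": len(parser.script_srcs),
        "missing_before_render": [s for s in must_see if s not in visible],
    }


# Hypothetical product page whose name and price are injected by JavaScript.
page = """<html><body>
<div id="product"></div>
<script src="/static/storage-manager.js"></script>
<script src="/static/sidebar.js"></script>
<script>renderProduct({"name": "Trail Runner", "price": "89.00"})</script>
</body></html>"""

print(audit_raw_html(page, ["Trail Runner", "89.00"]))
# {'external_scripts': 2, 'missing_before_render': ['Trail Runner', '89.00']}
```

If the strings only show up after rendering, every one of those scripts has to load successfully before Google can see your product.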

2. The Sitemap “Ghost” Phenomenon

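In the world of technical SEO, we often treat the Sitemap as a “set it and forget it” task. But for a search engine, the Sitemap is your most direct statement of which URLs matter. Google treats it as a hint, not a command, so a messy one quietly points the crawler in the wrong direction.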

I’ve seen stores where three different SEO plugins were generating three different sitemaps. One was clean, another was full of old HTTP links, and the third was “poisoned” with URL parameters like ?___store=default.

When you feed Google conflicting maps, it has little reason to trust any of them. If your Sitemap is “dirty,” Googlebot falls back on guessing. It wanders into “Infinite Spaces” (sections of your site with endless sorting and filtering combinations) and wastes your Crawl Budget on junk while your actual money-making products sit in the dark.
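To check for “ghost” and “poisoned” sitemaps yourself, a minimal sketch like the one below parses each sitemap’s `<loc>` entries. It flags three things: non-HTTPS URLs, URLs with query parameters (such as `?___store=default`), and URLs listed in more than one sitemap. It assumes plain `<urlset>` files in the standard sitemaps.org namespace and does not expand sitemap index files. The `audit_sitemaps` name and the `shop.example` URLs are illustrative.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Return the <loc> values of a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def audit_sitemaps(sitemaps: dict[str, str]) -> dict[str, list[str]]:
    """Map each sitemap name to the problems found in it."""
    problems: dict[str, list[str]] = {}
    first_seen: dict[str, str] = {}  # URL -> first sitemap that listed it
    for name, xml_text in sitemaps.items():
        issues: list[str] = []
        for url in sitemap_urls(xml_text):
            parts = urlsplit(url)
            if parts.scheme != "https":
                issues.append(f"non-HTTPS URL: {url}")
            if parts.query:
                issues.append(f"parameterized URL: {url}")
            if url in first_seen and first_seen[url] != name:
                issues.append(f"also listed in {first_seen[url]}: {url}")
            first_seen.setdefault(url, name)
        problems[name] = issues
    return problems


# Hypothetical example: one clean sitemap and one left behind by an old plugin.
urlset = '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">{}</urlset>'
clean = urlset.format("<url><loc>https://shop.example/shoes</loc></url>")
dirty = urlset.format(
    "<url><loc>http://shop.example/shoes</loc></url>"
    "<url><loc>https://shop.example/shoes?___store=default</loc></url>"
)
print(audit_sitemaps({"sitemap.xml": clean, "sitemap_old.xml": dirty}))
```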

3. The “Crawl Budget” Myth vs. Reality

People often say, “I’m a small store, I don’t need to worry about crawl budget.” They are wrong.

Crawl budget isn’t just for Amazon. A large part of it comes down to how healthy your server looks to Googlebot: the ratio of Successful Fetches to Failed Resources. Suppose Googlebot visits your site and 40% of the resources (scripts, CSS, images) return an error or take too long to load. Google then treats your host as struggling and crawls it less.

Think of it like a restaurant. If you go there and the waiter tells you the kitchen is out of 10 items on the menu, you might stay once. If it happens three times, you stop going. Google is the most impatient diner in the world. If your server can’t deliver the JS files, Googlebot cuts back its visits, and it can take a long time before it crawls your site at full speed again.
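Your server’s access logs are one way to estimate that ratio. The sketch below assumes the common Apache/Nginx “combined” log format and simply matches “Googlebot” in the user-agent string. That string can be spoofed, and Google documents verifying real Googlebot traffic with a reverse DNS lookup. The sketch reports the share of Googlebot requests that returned a 4xx/5xx status and which JS/CSS files failed. The function name, the regex, and the choice to count every status of 400 or above as a failure are assumptions for illustration.

```python
import re
from collections import Counter

# Apache/Nginx "combined" format: ip ident user [time] "METHOD path PROTO" status size "referer" "agent"
LOG_RE = re.compile(
    r'^(\S+) \S+ \S+ \[[^\]]+\] "\S+ (\S+) [^"]*" (\d{3}) \S+ "[^"]*" "([^"]*)"'
)


def googlebot_fetch_health(lines):
    """Return (share of Googlebot requests with status >= 400, failing JS/CSS paths)."""
    total = 0
    failed = 0
    failing_assets: Counter = Counter()
    for line in lines:
        match = LOG_RE.match(line)
        if not match:
            continue  # skip lines in other formats
        _ip, path, status, agent = match.groups()
        if "Googlebot" not in agent:  # user-agent match only; not verified via DNS
            continue
        total += 1
        if int(status) >= 400:
            failed += 1
            if path.split("?")[0].endswith((".js", ".css")):
                failing_assets[path] += 1
    share = failed / total if total else 0.0
    return share, failing_assets


# Hypothetical log lines.
sample = [
    '66.249.66.1 - - [01/Mar/2026:10:00:00 +0000] "GET /static/sidebar.js HTTP/1.1" 503 0 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [01/Mar/2026:10:00:01 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
share, assets = googlebot_fetch_health(sample)
print(f"{share:.0%} of Googlebot fetches failed; failing assets: {dict(assets)}")
# 50% of Googlebot fetches failed; failing assets: {'/static/sidebar.js': 1}
```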

4. The Canonical Conflict: Who is the Boss?

One of the most frustrating issues in modern e-commerce SEO is the “Canonical Mismatch.” You tell Google: “This is my main product page.” Google looks at your site, sees five different versions of that URL with different tracking parameters, and says: “No, I think the version with the ‘default store’ tag is the main one.”

Suddenly, your rankings disappear. Why? Because when Google overrides your Canonical tags, your ranking signals end up split across, or consolidated onto, URLs you never chose, and your pages compete with each other as duplicates. This isn’t a content problem; it’s a technical architecture failure.
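A quick way to catch this before Google does is to check that every parameterized variant of a page declares the clean URL as its canonical. The sketch below strips parameters assumed not to change the content. The `IGNORED_PARAMS` set and the `utm_` prefix are hypothetical choices for illustration. It then reports any crawled URL whose declared `rel=canonical` differs from its normalized form. Collecting the URL-to-canonical pairs, for example from a crawler export, is left out.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: parameters assumed NOT to change page content on this store.
IGNORED_PARAMS = {"___store", "gclid", "fbclid"}
IGNORED_PREFIXES = ("utm_",)


def normalize(url: str) -> str:
    """Drop ignored parameters, lowercase the host, sort the rest, drop the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS and not key.startswith(IGNORED_PREFIXES)
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(sorted(kept)), "")
    )


def canonical_mismatches(pages: dict[str, str]) -> list[tuple[str, str, str]]:
    """pages maps crawled URL -> declared canonical; return (url, declared, expected)."""
    return [
        (url, declared, normalize(url))
        for url, declared in pages.items()
        if declared != normalize(url)
    ]


# Hypothetical crawl: the ___store variant canonicalizes to itself.
pages = {
    "https://shop.example/shoes": "https://shop.example/shoes",
    "https://shop.example/shoes?utm_source=mail": "https://shop.example/shoes",
    "https://shop.example/shoes?___store=default": "https://shop.example/shoes?___store=default",
}
for url, declared, expected in canonical_mismatches(pages):
    print(f"{url}\n  declares {declared}\n  expected {expected}")
```

Self-referencing canonicals on parameter variants, like the `___store` one here, are a common way to end up with the mismatch described above.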

How to Bridge the Rendering Gap

So, how do you fix a site that Google has decided to ignore? The answer isn’t “more content.” The answer is Technical Hygiene.

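- Audit your JS Dependencies: Do you really need 300 scripts to sell a pair of shoes? In Google Search Console, run the URL Inspection tool’s “Test Live URL,” then open “View tested page” and the “More info” tab to see exactly which page resources couldn’t be loaded. If your core layout depends on a script that is blocked by robots.txt, Google may render nothing but an empty shell, and you are effectively invisible.

- Kill the Ghost Sitemaps: Keep one source of truth. A sitemap that was never submitted in Search Console can still be discovered if it sits on your server (for example, through a Sitemap: line in robots.txt). Once Google finds it, its stale URLs count against you.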

- Optimize for the Bot, not just the User: We spend thousands on UX for humans, but 0 on “BX” (Bot Experience). If your server is configured to block high-frequency requests, you might be accidentally blocking Googlebot.

- Watch the Core Web Vitals (CWV) History: Don’t just look at today’s score. Look at the trend. A sudden drop in “Good URLs” is the first warning sign of a technical collapse. It’s the heartbeat of your site.
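For the robots.txt check in the first bullet, Python’s standard `urllib.robotparser` gives a rough answer to “is this script blocked for Googlebot?”. Treat it as an approximation. It applies rules in file order and does not implement Google’s wildcard (`*`, `$`) matching or longest-match precedence, so confirm edge cases in Search Console. The `blocked_resources` helper and the sample rules and URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt: str, resource_urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that robots.txt disallows for `agent` (stdlib approximation)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [url for url in resource_urls if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that accidentally hides the store's JS directory.
robots_txt = """User-agent: *
Disallow: /static/
"""
critical = [
    "https://shop.example/static/storage-manager.js",
    "https://shop.example/static/sidebar.js",
    "https://shop.example/shoes",
]
print(blocked_resources(robots_txt, critical))
# ['https://shop.example/static/storage-manager.js', 'https://shop.example/static/sidebar.js']
```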

Final Thoughts

The era of “easy SEO” is over. We are now in the age of Technical Survival. If you are staring at a Search Console full of “Not Indexed” pages, don’t rush to delete your site or move to a new platform. The grass isn’t always greener on Shopify or WooCommerce.

Most of the time, the problem is a “silent killer”: a small technical conflict, a blocked script, or a messy sitemap that has spiraled out of control. Fix the foundation, and the rankings will follow.

Is your site actually visible to Google, or are you just hosting a very expensive “skeleton” for the bots? It’s time to check your rendering.
